I've mentioned before that I've gradually moved away from PC gaming in recent years, largely because keeping one's system and games up to date has gotten so complicated.

For a while, video game consoles didn't exhibit many of the issues I have with modern PC gaming... but the frustrations that drove me away from PC gaming are creeping into gaming consoles now.

Is this going to make me quit gaming altogether? Likely not. But is it a disappointing direction that might cause me to play fewer games every year? Possibly.

Here are some of the ways console gaming is starting to look and feel a lot like PC gaming, in all the wrong ways.

1. Games Are Broken on Day One

If you've been even tangentially keeping up with video games over the last few years, you should be all too familiar with the phrase "day-one patch."

Day-one patches used to be rare. If a game shipped with unforeseen yet glaring issues for a significant chunk of players, the developers would push out a quick update to fix the worst of it.

These day-one patches, which once carried a negative connotation and were reserved for games that were unplayably broken, have now become an expected part of modern game launches.

Why? Because most games are pressed to disc long before they appear on store shelves. Developers keep working on the game in the meantime, and those changes are delivered as a patch at release.

2. You Can't Just "Pop In and Play" Anymore

For as long as game cartridges and discs have been around, gamers could pop a new game into their console and start playing right away.

That expectation started disappearing with the advent of the PlayStation 3, and then all but died with the PlayStation 4 and Xbox One generation.

Back on the PlayStation 3, some games (like Metal Gear Solid 4: Guns of the Patriots) came with installers to reduce loading during gameplay. Even then, though, mandatory installs weren't common.

These days, video games are HUGE. Models and assets are massive, and consoles can't read that data off a disc fast enough for on-demand play, so games have to be installed first.

If you only play one game at a time, this isn't so bad. But you can no longer grab any game from your collection and start playing immediately, which used to be a big advantage consoles had over PCs.

3. Games Are Constantly Updating

Back in the olden days, video games were considered finished as soon as they made it onto the cartridge or disc. If there was a glitch or bug, it couldn't be fixed after the fact.

The Xbox 360 and PlayStation 3 made it common to patch games long after release. This was tremendously useful for fixing occasional glitches and bugs, and for games like Halo and Call of Duty, it was great for adding multiplayer maps.

But it's gotten out of hand now.

Video games just don't stay still anymore. Even if you never buy extra downloadable content, core games still need to update to prepare for users who do buy DLC. In some cases, these updates do little more than install giant ads for the next game in the series (*cough* Call of Duty: Modern Warfare *cough*).

Not only do these updates keep you from playing while they download and install, but they also take up valuable drive space and waste bandwidth (which can be costly if your internet plan has a monthly data cap).

This was bad enough on the PS4 and Xbox One, but given the limited storage on the PS5 and Xbox Series X|S, it severely limits how many games you can keep installed at any given time.

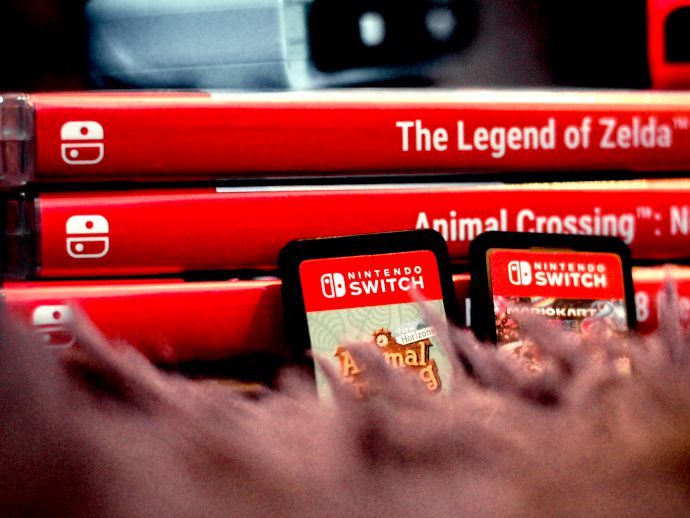

4. Even Nintendo Is Adopting the "PC Style"

Nintendo is famous for bucking modern trends in favor of whatever they feel like doing at any given time. Sometimes this works (e.g., Nintendo Switch) and sometimes it doesn't (e.g., Nintendo Wii U).

In the Nintendo Switch era, though, Nintendo seems to be taking more of a PC-style approach to everything.

For starters, day-one patches and constant updates are as big a problem on the Nintendo Switch as on any other modern gaming console. Nintendo also adopted an online model similar to Sony's and Microsoft's, where you need a paid subscription for online play.

At least the Nintendo Switch can still play games directly from Game Cards, which is one area where it has an advantage over all-digital, discless consoles. But with games being as large as they are, some Game Card releases still require a sizable download before you can play them.

Retro Games Might Be Better Anyway

One reason older gamers like me tend to think more fondly of vintage video games is pure nostalgia. I happily admit that.

But when you look at the state of modern console gaming, it's easy to see that not every technological advancement has been good for the video gaming landscape.

There were plenty of broken games in the 80s and 90s, of course. And because there was no mechanism for updating them, you were stuck with what you got.

But that also forced developers to make sure they got it right the first time. That's a big part of what made older games so enduring: the developers knew they had to do everything they could to make it count, because they only had one shot.